Archiving tweets without IFTTT

July 22, 2012 at 8:38 PM by Dr. Drang

Here’s a final bit of follow-up on the flurry of posts about Twitter and archiving tweets that I wrote early in the month. The way I left it, I was using the IFTTT web service to append new tweets to a text file in my Dropbox folder. Since I have no control over IFTTT, my preference would be to use my own archiving script sitting on my own hard disk. Today I wrote that script.

Plenty of people have written scripts to do this. I wrote my own because I wanted one written in a language (Python) and using a Twitter library (tweepy) with which I’m familiar. I used tweepy a while ago in a script that posts tweets with images. Tweepy is nice in that it automatically handles conversions between the pure text that the Twitter API deals in and the objects—integers and datetime objects, in particular—that Python understands and can manipulate directly.

Here’s the script, called archive-tweets.py:

python:

 1:  #!/usr/bin/python
 2:
 3:  import tweepy
 4:  import pytz
 5:  import os
 6:
 7:  # Twitter parameters.
 8:  me = 'drdrang'
 9:  consumerKey = 'abc'
10:  consumerKeySecret = 'def'
11:  accessToken = 'ghi'
12:  accessTokenSecret = 'jkl'
13:
14:  # Local file parameters.
15:  urlprefix = 'http://twitter.com/%s/status/' % me
16:  tweetdir = os.environ['HOME'] + '/Dropbox/twitter/'
17:  tweetfile = tweetdir + 'twitter.txt'
18:  idfile = tweetdir + 'lastID.txt'
19:
20:  # Date/time parameters.
21:  datefmt = '%B %-d, %Y at %-I:%M %p'
22:  homeTZ = pytz.timezone('US/Central')
23:  utc = pytz.utc
24:
25:  # This function pretty much taken directly from a tweepy example.
26:  def setup_api():
27:      auth = tweepy.OAuthHandler(consumerKey, consumerKeySecret)
28:      auth.set_access_token(accessToken, accessTokenSecret)
29:      return tweepy.API(auth)
30:
31:  # Authorize.
32:  api = setup_api()
33:
34:  # Get the ID of the last downloaded tweet.
35:  with open(idfile, 'r') as f:
36:      lastID = f.read().rstrip()
37:
38:  # Collect all the tweets since the last one.
39:  tweets = api.user_timeline(me, since_id=lastID)
40:
41:  # Write them out to the twitter.txt file.
42:  with open(tweetfile, 'a') as f:
43:      for t in reversed(tweets):
44:          ts = utc.localize(t.created_at).astimezone(homeTZ)
45:          lines = ['',
46:                   t.text,
47:                   ts.strftime(datefmt),
48:                   urlprefix + t.id_str,
49:                   '- - - - -',
50:                   '']
51:          f.write('\n'.join(lines).encode('utf8'))
52:          lastID = t.id_str
53:
54:  # Update the ID of the last downloaded tweet.
55:  with open(idfile, 'w') as f:
56:      f.write(lastID)

The first few sections define the parameters that control the way the script works. The Twitter parameters are my user name and the set of keys and secrets needed to interact with the Twitter API. The keys and secrets shown are obviously not the ones I really use. If you want to run your own version of this program, you’ll have to get your own keys and secrets from Twitter’s developer site. It’s not a big deal; I explained the steps in an earlier post.[1]

The local file parameters define where the tweet archive is, and the date/time parameters define how the dates will be formatted in the archive.

A key feature of the script is knowing how far back in my user timeline to look for new tweets. This is handled by storing the ID number of the last downloaded tweet in a file named lastID.txt, kept in the same folder as the archive file itself. When the script is run, Lines 35-36 open that file and read the number in it. This information is then used as the since_id parameter in the call to collect tweets from the user timeline in Line 39.

Update 7/24/12

As pointed out in the comments, I forgot to mention here that I took the ID of the last tweet in my archive—an archive I’d already built from tweets saved in ThinkUp—and used it to “seed” lastID.txt. In so doing, I avoided “file not found” errors.
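The seeding step could also be handled in code rather than by hand. The sketch below is not part of archive-tweets.py: `read_last_id` is a hypothetical helper, written for Python 3, that returns `None` when lastID.txt is missing or empty. What a first run should then do (for example, fetching the timeline without a since_id) is left to the caller.

```python
import os

def read_last_id(idfile):
    """Return the stored tweet ID as a string, or None if nothing usable is stored."""
    if not os.path.exists(idfile):
        return None                 # first run: no ID file yet
    with open(idfile, 'r') as f:
        last_id = f.read().strip()
    return last_id or None          # treat an empty file like a missing one
```

With this in place, the hand-seeded file still works as before; the helper only changes what happens when the file is absent.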

Since the user timeline returns the tweets in reverse chronological order, and I want to add them to the archive in chronological order, I reverse the list of tweets at the top of the loop in Line 43. For each tweet, I get the time stamp, which is in UTC, and convert it to my local time. I then write out

- the text of the tweet;

- the date and time in an easy-to-read format;[2]

- the URL of the tweet; and

- a dividing line

to the end of the archive file, which was opened for appending ('a') in Line 42.
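The `%-d` and `%-I` codes in the date format are platform extensions to strftime: they work on OS X and Linux but not on Windows. As a rough sketch of the same entry layout without them, the Python 3 code below builds the timestamp by hand. The function names, the sample tweet text, and the ID are made up for illustration, and a fixed UTC-5 offset stands in for the script's pytz `US/Central` zone, so it ignores daylight saving changes.

```python
from datetime import datetime, timedelta, timezone

URLPREFIX = 'http://twitter.com/drdrang/status/'

def format_timestamp(ts):
    """Format like 'July 22, 2012 at 8:38 PM' without %-d or %-I."""
    hour12 = ts.hour % 12 or 12          # 0 and 12 both display as 12
    ampm = 'AM' if ts.hour < 12 else 'PM'
    return '%s %d, %d at %d:%02d %s' % (
        ts.strftime('%B'), ts.day, ts.year, hour12, ts.minute, ampm)

def format_entry(text, created_at, id_str, tz):
    """Build one archive entry from a naive UTC datetime."""
    local = created_at.replace(tzinfo=timezone.utc).astimezone(tz)
    lines = ['', text, format_timestamp(local),
             URLPREFIX + id_str, '- - - - -', '']
    return '\n'.join(lines)

# Stand-in for US/Central during daylight saving time (UTC-5); made-up tweet.
cdt = timezone(timedelta(hours=-5))
entry = format_entry('Hello, archive.', datetime(2012, 7, 23, 1, 38), '123', cdt)
print(entry)
```

The month name still comes from strftime, so it follows whatever locale the process has set.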

As the script loops through the list of tweets, it updates the lastID variable on Line 52. This is then used to overwrite the old value in lastID.txt in Lines 55-56.
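One consequence of this bookkeeping is that a run with no new tweets leaves lastID alone, so lastID.txt is simply rewritten with the value it already held. The sketch below isolates that logic; the `Tweet` type is a stand-in for tweepy's status objects, and the IDs are made up.

```python
from collections import namedtuple

# Stand-in for tweepy's status objects; only the field used here.
Tweet = namedtuple('Tweet', ['id_str'])

def advance_last_id(tweets, last_id):
    """Walk the tweets oldest-first and return the ID to store for the next run."""
    for t in reversed(tweets):      # the timeline arrives newest-first
        last_id = t.id_str
    return last_id

newest_first = [Tweet('103'), Tweet('102'), Tweet('101')]
print(advance_last_id(newest_first, '100'))   # ID of the newest tweet
print(advance_last_id([], '100'))             # unchanged when nothing is new
```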

(As an aside, this is the first script I’ve written to use the with open() as idiom, first introduced in Python 2.5. I’m late to the party, but I like it.)

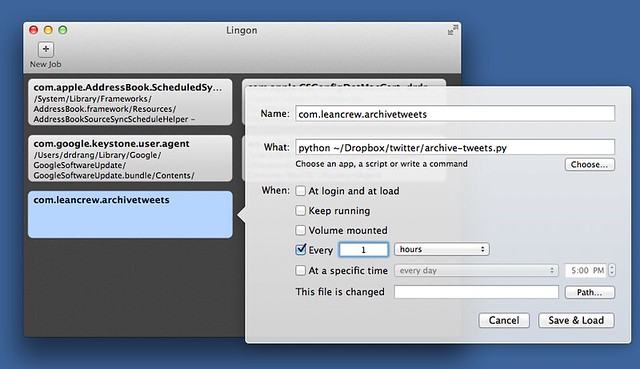

I certainly don’t want to run this script by hand, so I used Lingon 3 to create a Launch Agent that runs the script every hour on my work computer, a machine that’s always on.

Every hour is, I admit, extreme overkill. I’m only running it that often initially to test for bugs. In a day or two I’ll redefine the Launch Agent to run once a day.

The Launch Agent setup works only for Macs, but archive-tweets.py is a script that could run on any platform. If I were working on a Linux machine, I’d have its run times scheduled via cron. If I were working on Windows, I’d slit my throat and wouldn’t have to think about archiving tweets anymore.

Actually, unless I’ve screwed this script up, I can stay on OS X and still won’t have to think about archiving tweets anymore.

Update 7/22/12

The script seems to be working fine (it should; I did a fair amount of debugging and testing), but the Launch Agent started out wonky. First, using a script path of ~/Dropbox/twitter/archive-tweets.py caused problems. I should’ve known better than to use the tilde as an alias for my home directory. Going with a full, explicit path, /Users/drdrang/Dropbox/twitter/archive-tweets.py, eliminated some of the error messages.

Second, Lingon 3 added some self-identifying information to the plist file that launchd doesn’t like. The source code of com.leancrew.archivetweets.plist in my LaunchAgents folder was

xml:

 1:  <?xml version="1.0" encoding="UTF-8"?>
 2:  <!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">
 3:  <plist version="1.0">
 4:  <dict>
 5:      <key>Label</key>
 6:      <string>com.leancrew.archivetweets</string>
 7:      <key>LingonWhat</key>
 8:      <string>python /Users/drdrang/Dropbox/twitter/archive-tweets.py</string>
 9:      <key>ProgramArguments</key>
10:      <array>
11:          <string>python</string>
12:          <string>/Users/drdrang/Dropbox/twitter/archive-tweets.py</string>
13:      </array>
14:      <key>StartInterval</key>
15:      <integer>3600</integer>
16:  </dict>
17:  </plist>

The LingonWhat key in Line 7 was the source of this error:

7/22/12 9:43:52.900 PM com.apple.launchd.peruser.501: (com.leancrew.archivetweets) Unknown key: LingonWhat

Because that key/string pair doesn’t do anything, I just edited them out in TextMate, leading to

xml:

 1:  <?xml version="1.0" encoding="UTF-8"?>
 2:  <!DOCTYPE plist PUBLIC "-//Apple//DTD PLIST 1.0//EN" "http://www.apple.com/DTDs/PropertyList-1.0.dtd">
 3:  <plist version="1.0">
 4:  <dict>
 5:      <key>Label</key>
 6:      <string>com.leancrew.archivetweets</string>
 7:      <key>ProgramArguments</key>
 8:      <array>
 9:          <string>python</string>
10:          <string>/Users/drdrang/Dropbox/twitter/archive-tweets.py</string>
11:      </array>
12:      <key>StartInterval</key>
13:      <integer>3600</integer>
14:  </dict>
15:  </plist>

With that change, launchd began running the script with no errors and new tweets started accumulating in my archive file.
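Both problems, the tilde and the extra key, could in principle be avoided by generating the plist from a script instead of a GUI tool. The following is only a sketch under that assumption, using the `plistlib` module from Python 3's standard library; `build_agent` is a hypothetical helper, and installing the result in the LaunchAgents folder and loading it are left out.

```python
import os
import plistlib

def build_agent(label, script_path, interval):
    """Return a launchd job dictionary holding only the three keys in the edited plist."""
    # Expand the tilde here, since the path has to be explicit in the plist.
    full_path = os.path.abspath(os.path.expanduser(script_path))
    return {
        'Label': label,
        'ProgramArguments': ['python', full_path],
        'StartInterval': interval,      # seconds between runs
    }

agent = build_agent('com.leancrew.archivetweets',
                    '~/Dropbox/twitter/archive-tweets.py', 3600)
xml = plistlib.dumps(agent).decode('utf-8')
print(xml)
```

Changing the schedule to once a day would then be a matter of passing 86400 as the interval.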

I’m going to shoot an email to Lingon 3’s developer, Peter Borg, to let him know about this error and ask why he puts that extraneous key/string pair in there.

[1] Because the script does nothing more than download publicly available tweets from the user’s timeline, I’m not certain that the keys and secrets are really necessary (as they are in a script that, for example, posts tweets). Because I already had a set of keys and secrets lying around from an earlier project, I just used those and didn’t explore any other possibilities.

[2] The date/time format is slightly different from the one used by the IFTTT recipe, which I had no control over, and my ThinkUp-to-archive script, which I wrote to match the IFTTT recipe’s output. Rather than have two different formats in my archive, I did some regex find-and-replaces to change the old formats to this new one.