Shellhead

January 15, 2013 at 8:48 PM by Dr. Drang

One of the nice things about having a plain text archive of all my posts on my local machine is that I can learn more about my writing through the standard Unix toolset. Sometimes it takes a while to figure out the best tool for the job.

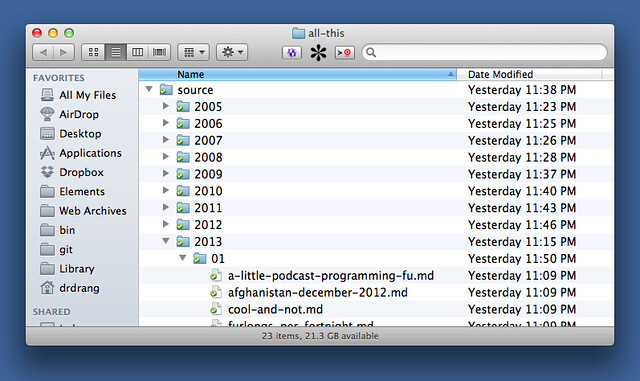

As I said last night, I now have the Markdown source of ANIAT in a set of files, one per post, in my Dropbox folder. The files sit in year and month subdirectories, so a post’s path relative to the source directory looks like 2013/01/lucre.md.

How many posts have I written? If I cd into the source directory, I can run

bash:

find . -name "*.md" | wc -l

Since all the files end in .md, the find command with the -name option seemed like the obvious solution. It’ll print out all the matching files below the current directory (.), one per line. Piping that output to wc with the -l (lines) option gives the count I was looking for: in this case, 1796. This isn’t the best way to get the number of posts, but it’s what I thought of first.
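To see the pipeline work without touching a real archive, here is a minimal sketch that builds a throwaway tree and counts the Markdown files in it. The directory and file names are made up for illustration, and the `tr` step is only there because some wc implementations pad the number with spaces.

```bash
#!/usr/bin/env bash
# Build a tiny throwaway tree shaped like year/month/post.md (hypothetical names).
demo=$(mktemp -d)
mkdir -p "$demo/2012/12" "$demo/2013/01"
echo "one two three" > "$demo/2012/12/first.md"
echo "four five"     > "$demo/2013/01/second.md"
echo "six"           > "$demo/2013/01/third.md"
echo "not a post"    > "$demo/2013/01/notes.txt"   # should not be counted

# find prints one matching path per line; wc -l counts those lines.
# tr strips the leading padding that some wc implementations add.
count=$(cd "$demo" && find . -name "*.md" | wc -l | tr -d ' ')
echo "$count"

rm -rf "$demo"
```

One caveat: because this counts lines, a filename containing a newline would be counted twice. That isn’t a concern for post slugs like these.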

Find is a pretty complicated beast, one that I’ve never really tamed. But I do know how to do a few things with it. Once I had the number of files, I wondered how many words I’d written. I knew I could get the number of words in each file through

bash:

find . -name "*.md" -exec wc -w {} \;

The first part of this command, up through "*.md", generates the list of all my posts. The rest of the command runs wc -w once for each of those files. The empty braces act as a sort of variable that holds the name of each file in turn, and the escaped semicolon tells -exec that its command is finished. You have to escape the semicolon with a backslash so that it gets handled by -exec instead of the shell.
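As a side note on that terminator, find also accepts a plus sign in place of the escaped semicolon, which passes many filenames to a single invocation of the command. The sketch below compares the two forms on a made-up two-file tree. It assumes both files fit on one command line, which is certainly true here.

```bash
#!/usr/bin/env bash
# Throwaway tree with two small posts (hypothetical names).
demo=$(mktemp -d)
mkdir -p "$demo/2013/01"
echo "one two three" > "$demo/2013/01/a.md"
echo "four five"     > "$demo/2013/01/b.md"

# \; runs wc once per file: one output line per file and no total.
per_file=$(cd "$demo" && find . -name "*.md" -exec wc -w {} \;)
echo "$per_file"

# + hands wc as many files as fit on one command line,
# so wc sees several files at once and appends a "total" line.
batched=$(cd "$demo" && find . -name "*.md" -exec wc -w {} +)
echo "$batched"

rm -rf "$demo"
```

With a very large number of files, the plus form may split the list across several wc runs, each with its own total line, so it is not a drop-in way to get one grand total.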

The result is 1796 lines that look like this:

1170 ./2013/01/local-archive-of-wordpress-posts.md

788 ./2013/01/lucre.md

1289 ./2013/01/revisiting-castigliano-with-scipy.md

545 ./2013/01/textexpander-posix-paths-and-forgetfulness.md

144 ./2013/01/what-apples-working-on.md

Each line has the word count of a file followed by its pathname relative to the given directory. We want to add all those counts. Awk would be a natural tool for the summation because it splits lines on white space automatically, but I’m not very good with awk. I chose to use Perl, which has an awk-like autosplit command line option. Here’s the full command:

bash:

find . -name "*.md" -exec wc -w {} \; | perl -ane '$s+=$F[0];END{print $s}'

The -ane is a set of three switches ganged together that tell Perl to

- Read each line of standard input and apply the script to each line in turn (n).
- Split the line on whitespace and put the resulting list into the @F array (a).
- Use the next item on the command line as the script (e).

The $s variable springs to life already initialized to zero the first time it’s referenced. It acts as the accumulator of the word count of the individual files by repeatedly adding the first item ($F[0]) of each line to itself. The END construct, which prints the value of $s, is a special portion of the code that doesn’t get executed with each input line; it’s run only after all the input lines are processed. The output is 932041, so I’m heading toward a million words here.
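For comparison, the same accumulate-then-print-at-the-end pattern can be written in awk, the tool mentioned above as the natural fit. This is only a sketch, not what the post actually ran. It feeds awk the five sample output lines shown earlier rather than the whole archive, and `sample`, `total`, and `s` are names chosen for illustration.

```bash
#!/usr/bin/env bash
# Five lines of wc -w output copied from the sample shown earlier.
sample='1170 ./2013/01/local-archive-of-wordpress-posts.md
788 ./2013/01/lucre.md
1289 ./2013/01/revisiting-castigliano-with-scipy.md
545 ./2013/01/textexpander-posix-paths-and-forgetfulness.md
144 ./2013/01/what-apples-working-on.md'

# awk splits each line on whitespace automatically: $1 is the word count.
# s starts out empty (treated as zero) and accumulates the counts;
# the END block runs once, after the last input line.
total=$(printf '%s\n' "$sample" | awk '{ s += $1 } END { print s }')
echo "$total"   # 3936 for these five lines
```

Here awk’s `$1` plays the role of Perl’s `$F[0]`, and the two END blocks behave the same way.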

This was fun, but I realized after getting the answer that find was overkill for this problem. Find is a good command to use when you have files at several (or unknown) levels in the directory hierarchy, but that’s not what I have. From the source directory, each Markdown file is exactly two levels below. I could have gotten the file count more easily by

bash:

ls */*/*.md | wc -l

Even better, when given a list of files, wc will return not only the counts for each file in the list, but also the cumulative counts in a final line at the bottom of the output. Thus,

bash:

wc -w */*/*.md

will return a long output that ends with

861 2013/01/google-lucky-links-in-bbedit.md

1170 2013/01/local-archive-of-wordpress-posts.md

788 2013/01/lucre.md

1289 2013/01/revisiting-castigliano-with-scipy.md

545 2013/01/textexpander-posix-paths-and-forgetfulness.md

144 2013/01/what-apples-working-on.md

932041 total

which gives the same total word count I got with the more complicated find/Perl pipeline. And if I didn’t want to clutter my Terminal window with the word counts for all 1796 individual files, I could’ve run

bash:

wc -w */*/*.md | tail -1

to get just the cumulative line.
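One thing to keep in mind is that wc prints the total line only when it is given two or more files. The sketch below, again on a made-up throwaway tree, shows the tail approach alongside an alternative that concatenates the files first, which yields a bare number however many files match.

```bash
#!/usr/bin/env bash
# Throwaway tree, two levels deep like the archive (hypothetical names).
demo=$(mktemp -d)
mkdir -p "$demo/2012/12" "$demo/2013/01"
echo "one two three" > "$demo/2012/12/first.md"
echo "four five"     > "$demo/2013/01/second.md"

# With two or more files, the last line of wc's output is the cumulative count.
last_line=$(cd "$demo" && wc -w */*/*.md | tail -1)
echo "$last_line"

# Concatenating first gives just the number, even if only one file matches.
# Caveat: if a file lacks a trailing newline, its last word can merge with
# the first word of the next file, so the count may come out slightly lower.
bare=$(cd "$demo" && cat */*/*.md | wc -w | tr -d ' ')
echo "$bare"

rm -rf "$demo"
```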

If there’s a moral to this story, I guess it would be “think before you write,” although sometimes you can learn by doing things the wrong way.