My favorite Apple accessory

April 27, 2026 at 11:42 AM by Dr. Drang

I meant to write this last week, shortly after I finished listening to Episode 612 of Upgrade. But things didn’t work out the way I hoped, so now I’m rushing to finish these few paragraphs before this week’s episode comes out (they’re recording as I type). I have nothing to say about the Tim Cook/John Ternus news that hasn’t already been said. I want to focus on Jason and Myke’s choices—made and unmade—in their Apple at 50 Draft.

My favorite picks were the oddballs, the products that weren’t Macs, iPhones, iPads, iPods, or Apple IIs. In other words: the accessories. I was particularly pleased with Jason’s picks of the LaserWriter, the Apple Disk II, the Apple Watch Sport Band, and the second-generation Apple Pencil. I confess I was a little disappointed in Myke’s choice of the first-generation Pencil, but he more than made up for it by later choosing the Magic Trackpad.

Those of you who weren’t around in the 80s and 90s may think Jason went overboard in putting the LaserWriter in as his third pick, but you’d be wrong. It was both a great product and incredibly important to Apple. Similar comments apply to the Apple Disk II. I never had one—I never owned an Apple II—but I did have its successor, the Integrated Woz Machine, in all of my early Macs.

My oddball entry would have been the AirPort Express. This is not in the “I can’t believe you didn’t pick” category[1] because it’s an oddball even among oddballs, but for a short period of time for a specific subset of users, it was a great accessory.

If you were a business traveler during those few years in the mid-00s when hotels had wired internet access in their rooms but hadn’t yet outfitted themselves with WiFi, the first-generation AirPort Express was one of the best things you could pack. It was about the same size as the wall wart power supply that came with your Apple notebook, and it set up a little WiFi network that gave you the freedom to work (or play) anywhere in your room. Even after hotel WiFi became common, I still packed my AirPort Express because it gave me a faster and more reliable wireless network.

I should also mention that “AirPort” was one of Apple’s best product names. Too bad they don’t have any reason to bring it back.

-

[1] I’m trying to avoid Jason’s wrath here.

Krugman, Taylor, and Maclaurin

April 20, 2026 at 6:53 PM by Dr. Drang

In this morning’s blog/newsletter,[1] Paul Krugman included a couple of unusual graphs that I want to talk about. It took me a little while (longer than it should have) to figure out what he was doing and why, and I’m still not sure I agree with his plotting choices. Let’s go through them and you can decide for yourselves.

The two graphs of interest are made the same way, so we’ll focus on the first one. He introduces it this way:

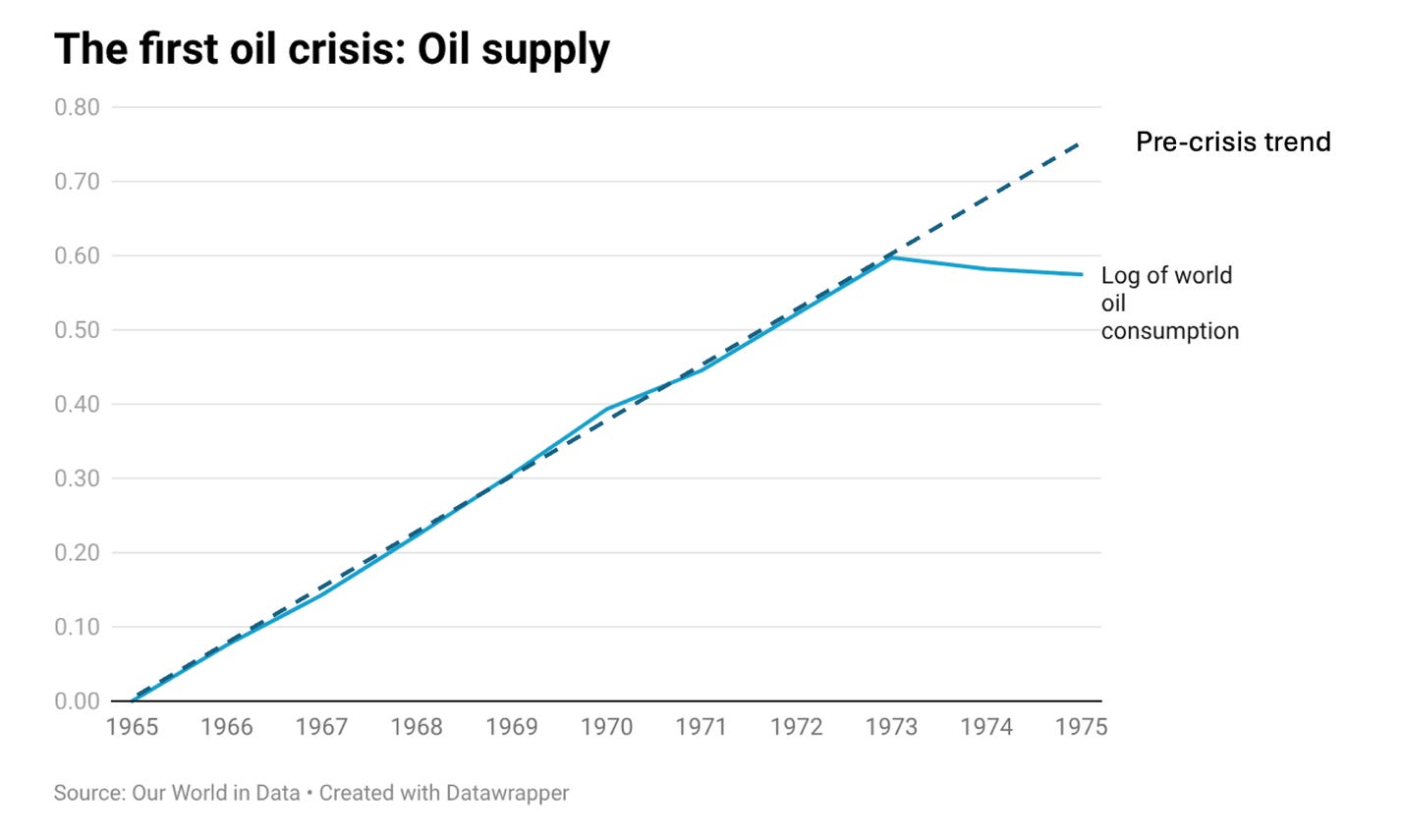

The closest parallel I know to the Hormuz crisis is the oil shock that followed the 1973 Yom Kippur War. (The 1979 Iran crisis was more complex, involving a lot of speculative price changes.) World oil supply fell only moderately after 1973, but it had been on a rapidly rising trend until then, so there was a large shortfall relative to that trend. In the chart below I show the natural log of world oil consumption with 1965 as the base year:

And here’s the graph itself:

Krugman usually apologizes in advance for the “wonky” parts of his posts, so I was surprised that he just breezed through the “natural log” and “base year” parts of his explanation. What he’s doing here is taking the oil consumption of a given year, dividing it by the consumption in 1965 (that’s the “base year” part), and then plotting the natural logarithm of that ratio against the year. The ratio for 1965 itself is, of course, one, which is why its log is zero.
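The transformation described above can be sketched in a few lines of Python. This is only an illustration: the consumption figures are invented, not real oil data, and the function name `log_relative_to_base` is hypothetical.

```python
import math


def log_relative_to_base(series, base_year):
    """Return {year: ln(value / base-year value)} for a {year: value} mapping."""
    base = series[base_year]
    return {year: math.log(value / base) for year, value in series.items()}


# Invented consumption figures in arbitrary units (assumption, not real data).
consumption = {1965: 100.0, 1966: 108.0, 1967: 116.0}

logs = log_relative_to_base(consumption, 1965)
for year, y in logs.items():
    print(year, round(y, 3))
```

The base year always maps to zero because its ratio to itself is one, whatever units the raw data are in.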

Using logarithms to plot exponential growth is common because you end up with a straight line. What isn’t common is plotting the logarithm (natural or common) of the values on a linear scale, as Krugman does here. Plotting software typically (always?) offers you the option of plotting the actual values on a logarithmic scale. The advantage of taking that option is that although the ticks will be unevenly spread, the tick labels will represent those actual values, not their hard-to-interpret logarithms.

The interior of the plot would look the same if Krugman had used a log scale for the vertical axis. We’d still see the straight line showing exponential growth from 1965 through 1973, and then the very slight decay after that. The only difference would be the spacing and labeling of the horizontal grid lines.

So why did Krugman make the plot the way he did? I guess the answer lies in the paragraph after the plot:

The percentage difference between two numbers is approximately the difference in their natural logs times 100. So this chart shows that the world was burning approximately 17.5 percent less oil in 1975 than it would have under the pre-1973 trend — a supply shock not too different from what we will see now if the Strait remains closed.
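As a rough check on how good that rule of thumb is, here is a small Python sketch that compares “difference in logs times 100” with the exact percentage shortfall. The only input taken from the quote is the 17.5 figure, treated here as a log difference of 0.175; the function name is hypothetical.

```python
import math


def pct_shortfall_from_log_gap(log_gap):
    """Exact percentage by which the lower value falls short of the higher one,
    given log_gap = ln(higher) - ln(lower)."""
    return 100 * (1 - math.exp(-log_gap))


log_gap = 0.175  # ln(trend) - ln(actual), inferred from the 17.5 percent in the quote
approx = 100 * log_gap
exact = pct_shortfall_from_log_gap(log_gap)
print(f"approximate: {approx:.1f}%  exact: {exact:.1f}%")
```

For a gap this size the exact shortfall comes out at roughly 16 percent, so the approximation is close but not perfect, and it gets worse as the gap grows.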

The first sentence of this paragraph is what took me a while to understand. Then I remembered a Taylor series—actually a specialized Taylor series called a Maclaurin series—that I haven’t seen in quite a while.

Consider exponential growth at a yearly rate of $r$. After $t$ years, the value, relative to the starting value, will be

$$\frac{v}{v_0} = (1 + r)^t$$

(Note that $r$ is expressed as a decimal value. If the yearly growth is $5\%$, $r = 0.05$.)

Taking the natural log of both sides of this equation, and using the properties of logarithms and exponents, gives us

$$\ln \frac{v}{v_0} = m\,t$$

where

$$m = \ln(1 + r)$$

(I should mention here that because I’m an engineer instead of a mathematician or programmer, I use $\ln$ for the natural logarithm instead of $\log$. I use $\log$ for common [base 10] logarithms.)

This means that $m$ is the slope of the exponential growth portion of Krugman’s plot.

This is where the series expansion comes into play. As I nearly forgot, we can express this logarithmic term as

$$\ln(1 + r) = r - \frac{r^2}{2} + \frac{r^3}{3} - \frac{r^4}{4} + \cdots$$

and if $r$ is small, the higher-order terms are very small, and

$$m = \ln(1 + r) \approx r$$

so the growth rate (as a decimal) will be about equal to the slope of Krugman’s graph. To get the growth rate as a percentage, multiply by 100.
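To get a feel for how small “small” needs to be, the following Python sketch compares the one-term Maclaurin approximation of the natural log of one plus the growth rate with the exact value. The sample growth rates are arbitrary choices for illustration, and `maclaurin_log1p` is a hypothetical helper name.

```python
import math


def maclaurin_log1p(r, terms):
    """Partial sum of r - r^2/2 + r^3/3 - ... using the given number of terms."""
    return sum((-1) ** (k + 1) * r ** k / k for k in range(1, terms + 1))


for r in (0.01, 0.05, 0.10, 0.25):
    exact = math.log1p(r)              # natural log of (1 + r)
    one_term = maclaurin_log1p(r, 1)   # the first term of the series, i.e. r itself
    rel_err = (one_term - exact) / exact
    print(f"r = {r:.2f}  ln(1+r) = {exact:.4f}  relative error of r: {rel_err:.1%}")
```

Because the series alternates, using the growth rate alone always overestimates the log slightly, and the overshoot is roughly half the growth rate in relative terms.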

Is $r$ small? Yes. Over the eight-year period from 1965 to 1973, the slope of that portion of the graph is the rise in the natural log of oil consumption divided by eight years, which is pretty small.

The thing is, I’m pretty sure that whole “take the difference of the natural logs and multiply by 100” thing seemed like hand-waving to most of Krugman’s readers. If he’d just used a log scale on the vertical axis, he could’ve stated the lost oil consumption as a plain percentage, and it wouldn’t have seemed so magical because we’d all be able to see it in the graph.

Unless his purpose was to entertain people like me. In that case, good job!

-

[1] I’m pretty sure Krugman thinks of it as a newsletter because it’s hosted on Substack. I think of it as a blog because I read it through RSS.

Keyboard Maestro launchers

April 19, 2026 at 9:41 AM by Dr. Drang

During my seven-week Spotlight trial, I was reminded of how easy it is to make file and folder launchers in Keyboard Maestro. In case you’ve also forgotten, here’s a short post on how to do it.

There are three items that I open quite often and that Spotlight was slow to find:

- My `blog-stuff` directory, which is where I keep all the text files, scripts, images, and other components that go into making blog posts. Each post, or set of posts, gets its own subdirectory in `blog-stuff`.
- My notebook index file, which is where I keep a list of all the entries in my paper notebooks.
- My `calendrical` directory, which is where I keep the code and support files for my current programming project: a Python module implementing the functions described in Reingold & Dershowitz’s Calendrical Calculations. This folder differs from the items above in that I won’t always need quick access to it, but I will until the project is finished.

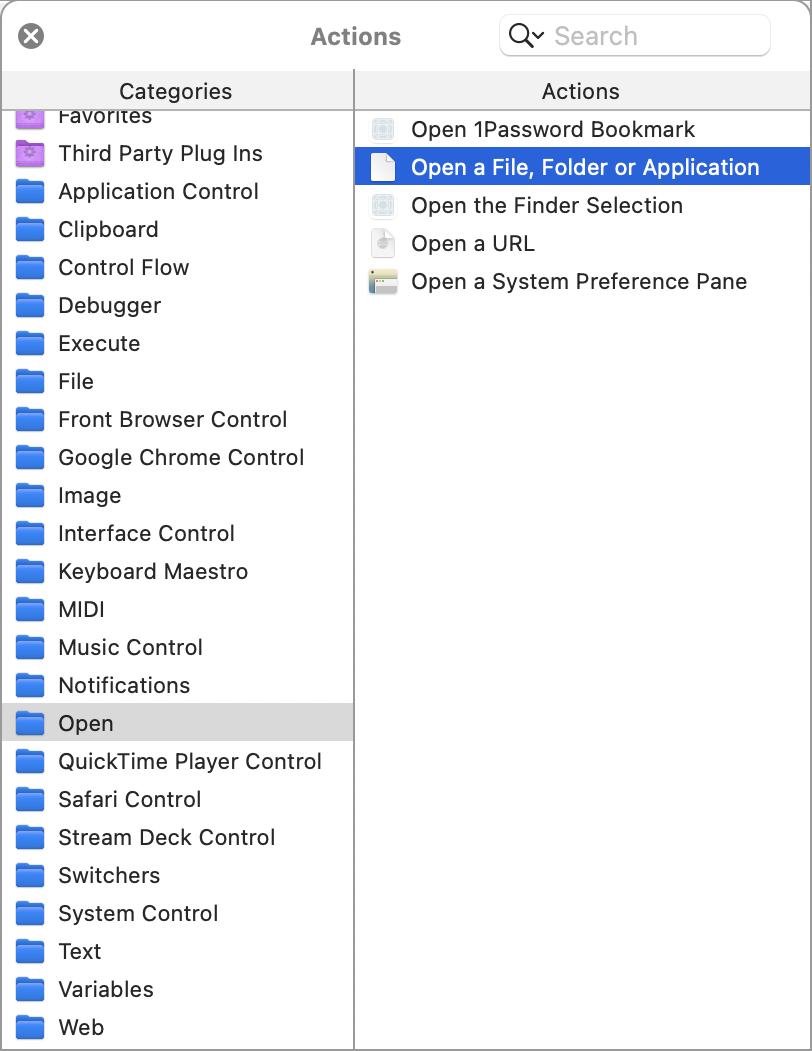

The key to making a Keyboard Maestro macro to instantly launch a file or folder is the action in the category. Here’s where you’ll find it in the Actions panel.

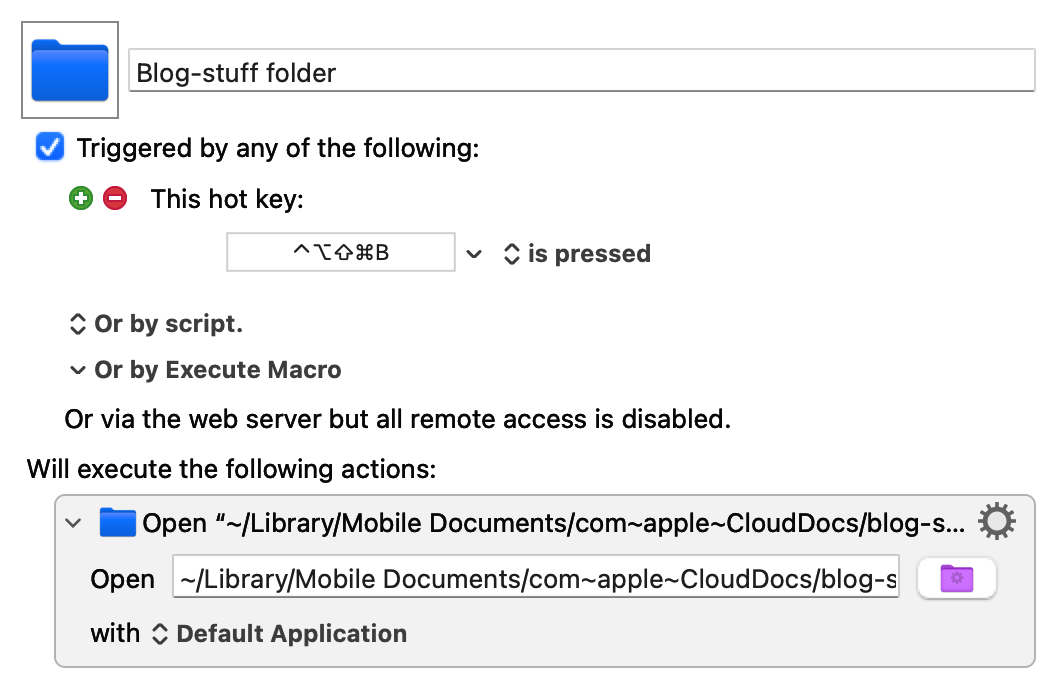

The macros for opening the blog-stuff and calendrical folders in the Finder are one-step macros that look like this:

The path to the blog-stuff folder is

~/Library/Mobile Documents/com~apple~CloudDocs/blog-stuff

The calendrical macro looks the same, except it uses the keyboard shortcut ⌃⌥⇧⌘C and opens

~/Library/Mobile Documents/com~apple~CloudDocs/programming/calendrical

(These keyboard shortcuts have the sort of complicated chording I’d never use if I weren’t running Karabiner Elements to turn Caps Lock into a “Hyper” key that mimics pressing ⌃⌥⇧⌘ simultaneously. If you read Brett Terpstra, you’ll recognize the Hyper key.)
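If you don’t have Keyboard Maestro, a similar one-step launcher can be approximated with macOS’s `open` command, which opens a folder in the Finder or a file in its default app. The Python sketch below is an assumption about how you might wrap it, not what the macros above do internally; the function names are hypothetical, and you’d still need some other tool to bind the script to a hotkey.

```python
import os
import subprocess


def open_command(path):
    """Build the argument list for macOS's `open`, expanding a leading ~."""
    return ["open", os.path.expanduser(path)]


def open_item(path):
    """Open a file or folder the way a double-click would (macOS only)."""
    subprocess.run(open_command(path), check=True)


# Path taken from the post; the space in "Mobile Documents" needs no quoting
# because the command is passed as a list rather than through a shell.
BLOG_STUFF = "~/Library/Mobile Documents/com~apple~CloudDocs/blog-stuff"

print(open_command(BLOG_STUFF))
```

Calling `open_item(BLOG_STUFF)` on a Mac would then open the folder in the Finder.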

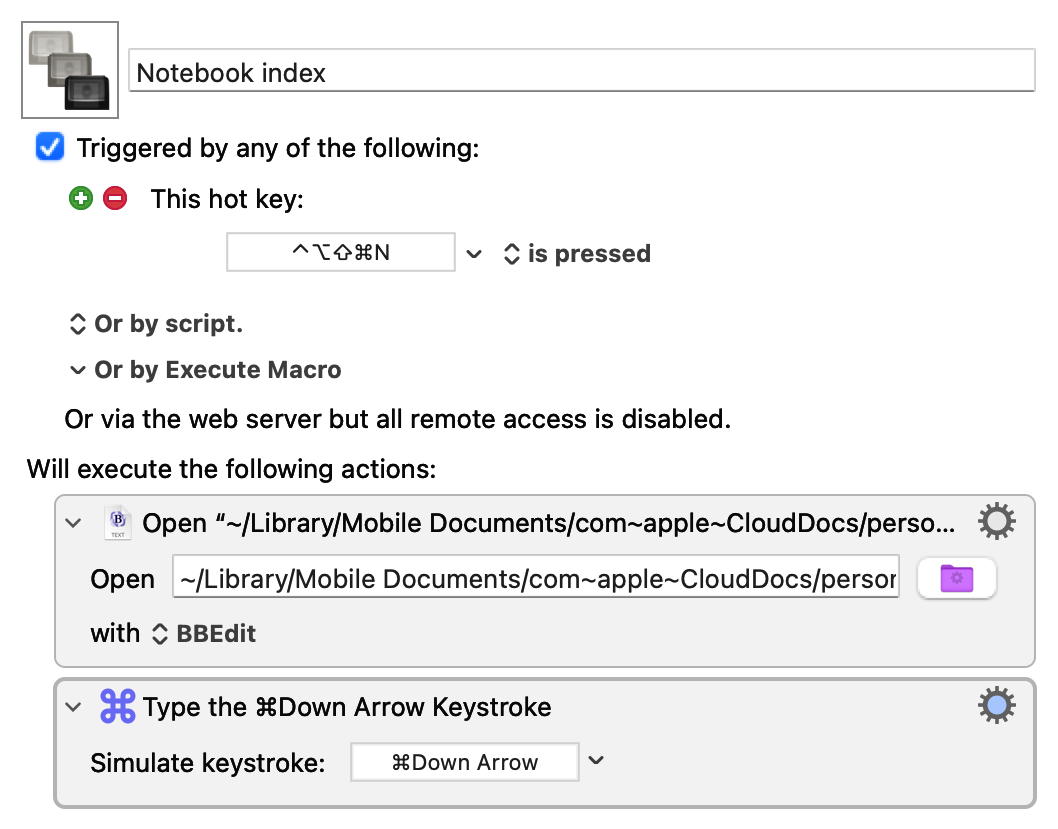

The macro for opening the notebook index file is only slightly more complicated:

The path to the file is

~/Library/Mobile Documents/com~apple~CloudDocs/personal/Notebook index.txt

and the second step puts the cursor at the bottom of the file, which is usually where I want it, as I’m typically opening the index to add a new entry.

Even though I’m back with LaunchBar, and I can use it to get to these files and folders quickly, I’m keeping the macros. They’re not that much faster than ⌘-Space and typing a few characters, but they’re more accurate. There’s no risk of typing “cla” instead of “cal” when I want to open the calendrical folder.

Launchers and me

April 18, 2026 at 11:13 AM by Dr. Drang

I started using launchers shortly after returning to the Mac (from Linux) in 2005. The first one I used was the great Quicksilver. I’m sure I learned about it from Merlin Mann, who was Quicksilver’s biggest advocate, but I can’t point to which of his many posts on QS got me started.

When Nicholas Jitkoff (Alcor) stopped developing Quicksilver in 2007 or so, I switched to LaunchBar, and that’s been my main launcher ever since.[1] I gave Alfred a workout for a few months (inspired, I think, by this episode of Mac Power Users), and I’ve tried Spotlight a few times, but I’ve always returned to LaunchBar.

My most recent trial of Spotlight began in late February and ended yesterday. I’d been hearing about the new and improved Spotlight since the introduction of macOS 26/Tahoe, and this episode of Upgrade inspired me to give it another shot. You may recall that as the episode in which Jason and Myke reviewed the results of Jason’s annual Apple Report Card, and they talked about Spotlight as being one of Tahoe’s significant improvements.

So I turned off LaunchBar and began using Spotlight exclusively. It sucked. I hung on that long only because I kept thinking, “Surely it’s going to improve as it learns my habits.” It didn’t. It was unbearably slow when I started using it, and it was still unbearably slow when I finally decided to pull the plug on it yesterday.

How slow? Finding files and folders—even files and folders that I had been searching for and opening for a few days—typically took several seconds (yes, s…e…v…e…r…a…l seconds). Finding and launching apps with Spotlight was much faster, but even that had a noticeable delay. You may remember that Quicksilver was so-named because it was quick—so are LaunchBar and Alfred. Spotlight, despite being a system feature, is not.

So I’m back to LaunchBar. A new release came out during my Spotlight experiment, which was heartening, as I’ve been worried about LaunchBar’s continued viability as a product. I upgraded and reindexed my system (which took only a few seconds), and it feels like I’m back at my Mac again.

One last thing: If you feel compelled to tell me the Good News about Raycast, please restrain yourself. I know about Raycast, and I know that it seems like just the thing for someone who does as much scripting and automation as I do. And maybe it is. But it seems like a project I don’t want to take on right now. If I change my mind, I’ll let you know.

Update 18 Apr 2026 1:07 PM

It’s possible that Spotlight would work at a reasonable speed if I reindexed it. Myke Hurley has mentioned (not in the above-linked episode, but in others) that he’s needed to reindex Spotlight a couple of times. If that’s what I need to do to get it to work properly, count me out. Yes, I did reindex LaunchBar yesterday, but that was because it hadn’t run in seven weeks, and I wanted it up to date right away—I’ve never had to reindex it regularly.

-

[1] I’ve never tried the reconstituted Quicksilver. It may be fine, but I just don’t think it has enough momentum behind it.